Latency is a little-known concept, yet it strongly impacts the loading time of a website. In this blog post, I’m going to explain several things: what is latency? What effects should you expect on the loading time of a page? How can you minimize its impact?

What is latency?

In a computer network, latency is the time data takes to travel from a point A to a point B. In the case of a website, it is the minimum delay required for data to travel across the network between the web server and the user’s browser, and back. Latency is therefore related to the distance between the visitor and the server hosting the website.
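To get a feel for this value, here is a minimal Python sketch that times how long it takes to open a TCP connection. Opening a connection requires one exchange with the server, so the result is a rough approximation of one round trip (plus name resolution when a hostname is given). The function name `tcp_connect_time_ms` is my own and purely illustrative.

```python
import socket
import time


def tcp_connect_time_ms(host: str, port: int, timeout: float = 3.0) -> float:
    """Return the time (in ms) needed to open a TCP connection to host:port.

    This approximates one network round trip. It also includes DNS
    resolution when `host` is a name rather than an IP address.
    """
    start = time.perf_counter()
    # create_connection resolves the host and performs the TCP handshake.
    with socket.create_connection((host, port), timeout=timeout):
        pass  # we only care about the connection time, not the payload
    return (time.perf_counter() - start) * 1000.0
```

For example, `tcp_connect_time_ms("example.com", 443)` called from two different locations should give noticeably different values depending on how far each one is from the server.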

NB: latency is independent of bandwidth (the amount of data that can be received over a given period of time, expressed in MB/s for example). Both are decisive for performance, but one tells you nothing about the other.

Latency vs Bandwidth: the impacts

To go further, I wanted to measure the impact of both latency and bandwidth on the loading time of the dareboost.com homepage.

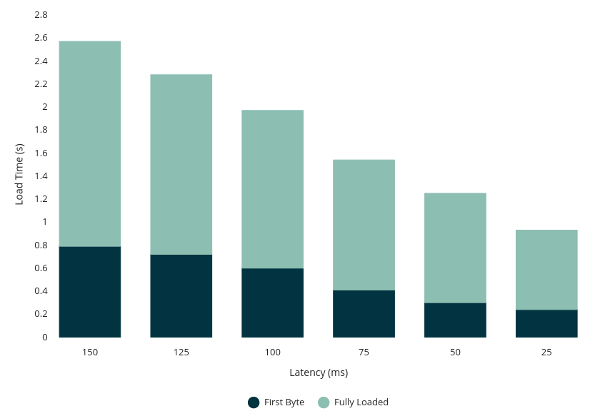

To do so, I ran several tests with a fixed bandwidth of 8.0/1.5 Mbps and a latency going from 150 ms down to 25 ms:

Results: latency affects the loading time in a linear way. The lower the latency, the faster the website loads (note the impact on the time to first byte as well).

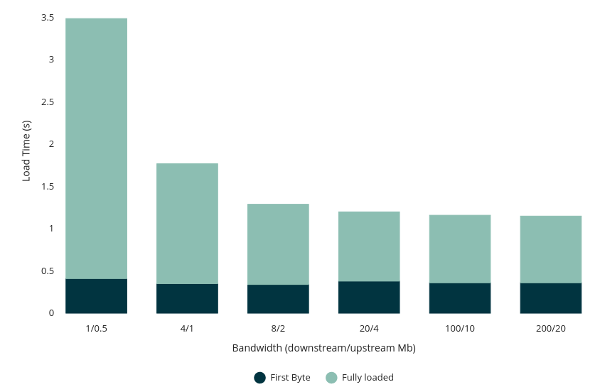

Second, to measure the impact of bandwidth, I fixed the latency at 50 ms and varied the bandwidth:

Observation: as expected, once a certain threshold is reached (which depends on the amount of data required to display the page), increasing the bandwidth has little or no further impact on the total loading time, because bandwidth is no longer the bottleneck.
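Both observations can be illustrated with a deliberately simplified model: loading time is roughly the number of round trips multiplied by the round-trip time, plus the time needed to transfer the data. This is only a sketch. It ignores TCP slow start, parallel connections and server processing time, and the figures used (20 round trips, a 1 MB page) are hypothetical, not the values measured on dareboost.com.

```python
def estimated_load_time_s(round_trips: int, rtt_ms: float,
                          page_bytes: int, bandwidth_mbps: float) -> float:
    """Very rough loading-time estimate, in seconds.

    Assumes each round trip costs one full round-trip time (rtt_ms)
    and that the transfer uses the whole bandwidth.
    """
    latency_cost = round_trips * rtt_ms / 1000.0
    transfer_cost = page_bytes * 8 / (bandwidth_mbps * 1_000_000)
    return latency_cost + transfer_cost


# Hypothetical page: 20 sequential round trips, 1 MB of data.
ROUND_TRIPS = 20
PAGE_BYTES = 1_000_000

# Latency varies, bandwidth fixed: the estimate changes linearly.
for rtt in (150, 100, 50, 25):
    print(f"rtt={rtt} ms -> {estimated_load_time_s(ROUND_TRIPS, rtt, PAGE_BYTES, 8):.2f} s")

# Bandwidth varies, latency fixed: the gain shrinks as bandwidth grows.
for mbps in (1, 2, 8, 50, 100):
    print(f"bandwidth={mbps} Mbps -> {estimated_load_time_s(ROUND_TRIPS, 50, PAGE_BYTES, mbps):.2f} s")
```

In this model the latency term does not depend on bandwidth at all, which is why adding bandwidth stops helping once the transfer term becomes small.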

How to minimize the impact of latency?

Several techniques help limit the effect of latency on loading time. Keep two objectives in mind: minimizing the number of round trips to your servers (that is, the number of times the latency applies), and reducing as much as possible the distance between those servers and your users (to lower the latency itself).

Limiting the number of round trips

To reduce the number of round trips to your servers, the approach is simple: your page should make as few requests as possible. Technically, you can do so by concatenating JavaScript or CSS files, using CSS sprites, embedding small resources directly in the HTML code (inlining), or adopting an efficient cache policy. When analysing your website on dareboost.com, have a look at "Number of requests" and "Cache policy".
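As an illustration of inlining, here is a small Python sketch that turns a resource into a base64 data URI, which can then be placed directly in an `src` attribute or a CSS `url(...)` instead of triggering a separate request. The helper name `to_data_uri` is hypothetical. Keep in mind that base64 encoding increases the size by about a third, so this only makes sense for small resources.

```python
import base64
import mimetypes


def to_data_uri(filename: str, content: bytes) -> str:
    """Encode `content` as a data URI, guessing the MIME type from the filename."""
    mime, _ = mimetypes.guess_type(filename)
    if mime is None:
        mime = "application/octet-stream"  # fallback for unknown extensions
    encoded = base64.b64encode(content).decode("ascii")
    return f"data:{mime};base64,{encoded}"


def inline_image_tag(filename: str, content: bytes, alt: str = "") -> str:
    """Build an <img> tag whose image data is embedded in the HTML itself."""
    return f'<img src="{to_data_uri(filename, content)}" alt="{alt}">'
```

In a build step, you would read the file with `open(path, "rb").read()` and pass the bytes to `inline_image_tag`, so the browser gets the image together with the HTML.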

Let’s not forget that HTTP/2 will soon become widespread and will help here thanks to multiplexing (several requests sharing a single connection).

Reducing the distance between your servers and the web user

As mentioned above, latency is related to the distance between a point A and a point B, and that part of it cannot be reduced. The only way to lower it is therefore to bring points A and B closer together. CDNs (Content Delivery Networks) were created to meet this goal: your content is duplicated on servers spread around the world. A web user located in Paris gets content from a server in Paris, whereas a web user in Sydney gets it from a server in Sydney. If you are interested in this topic, we conducted a more in-depth study of CDNs and the gains to expect.
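To see why distance sets a floor on latency, here is a rough Python estimate of the minimum round-trip time between two cities. It is a sketch under stated assumptions: the signal follows the great-circle path (real routes are longer), and it travels at about 200,000 km/s, roughly two thirds of the speed of light, which is a common approximation for optical fibre. The coordinates are approximate city centres.

```python
import math

EARTH_RADIUS_KM = 6371.0
FIBER_SPEED_KM_S = 200_000.0  # assumption: ~2/3 of the speed of light


def great_circle_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points (haversine formula)."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def min_rtt_ms(distance_km: float) -> float:
    """Lower bound on the round-trip time over the given distance."""
    return 2 * distance_km / FIBER_SPEED_KM_S * 1000.0


PARIS = (48.8566, 2.3522)
SYDNEY = (-33.8688, 151.2093)

distance = great_circle_km(*PARIS, *SYDNEY)
print(f"Paris-Sydney: {distance:.0f} km, round trip of at least {min_rtt_ms(distance):.0f} ms")
```

No server tuning can get below this bound, whereas a server in the same city as the visitor brings the distance term close to zero.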

Conclusion and recommendations

As mentioned earlier, latency is an important factor in your website's loading time. If you target an international audience, you should consider using a CDN to improve the experience of your visitors by bringing your content closer to them. In any case, make sure to minimize the number of requests required to display your page, especially for the part above the fold.

Dareboost.com gives you all the tooling needed to test your website speed: geographical location of the test, bandwidth settings, or simulation of a high latency!

Thank you, interesting information…

Total agreement, this is why I find overuse of OOP to be wrong.

Likewise, CMS systems should be streamlined to use combined files for multiple components – this has the benefit of design consistency as well.

Thank you, I like dareboost.