As a reminder, HTTPS (HTTP Secure) is, as its name indicates, the secure version of the HTTP protocol. HTTPS aims to guarantee the confidentiality and integrity of exchanges: because the communication is encrypted, the protocol protects against eavesdropping as well as against data alteration.

Its use has been growing for several years: in April 2015, 7 times more requests were made over HTTPS than in 2011. By the end of the year, though, we should see this trend intensify massively.

I invite you to discover why in this article, which also touches on some of the implementation and performance issues at stake.

Chrome: HTTP will clearly appear as non-secure.

In December 2014, the Chrome security team published a proposal addressed to all web browser vendors: websites using the non-secure version of the protocol should be clearly marked as non-secure by browsers.

Until now, it was the opposite: a website using HTTPS got a positive visual signal (most of the time, a padlock next to the URL).


When a problem occurred on such a site, the padlock could be crossed out to highlight it.

The new approach is to explicitly flag a security issue, with a warning sign, on every website using conventional HTTP. Here are two sentences that, in my opinion, sum up the Chrome team’s motivations very well:

We know that active tampering and surveillance attacks, as well as passive surveillance attacks, are not theoretical but are in fact commonplace on the web. […] We know that people do not generally perceive the absence of a warning sign.

In other words, the security of web communications is a serious matter. A user will never notice that a padlock is absent, so browsers need to go further. This is what Chrome is planning to do, with a progressive deployment plan scheduled for 2015.

Things actually go further still, since features that use sensitive or private data might become unavailable over HTTP. Firefox recently joined the movement.

Firefox plans to deprecate non-secure HTTP.

On April 13th, Richard Barnes, one of the people in charge of security at Mozilla, opened a discussion on creating a deprecation plan for the non-secure version of the protocol.

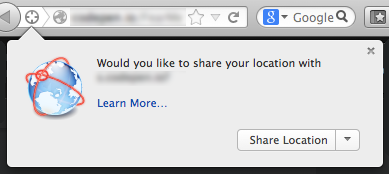

The plan builds on the current W3C proposal around the concept of Powerful Features (e.g. geolocation), which states that these features should only be available in a trustworthy environment.

In the first phase of the plan, only new browser features will be concerned. In the second phase, a set of existing features will be included as well. Which ones remains to be determined, but it has already been announced that everything related to user data should be among them.

Web browsers are moving fast towards deprecating HTTP, but that is not the only aspect to consider when thinking about migrating to HTTPS.

Google Search: HTTPS as a ranking signal

Since summer 2014, Google has been taking HTTPS into account as a ranking signal. The search engine points out that it remains a weak signal compared to, for instance, the weight given to content quality.

The announcement indicates that fewer than 1% of global search queries would be affected by this change. But be careful: in the same announcement, Google repeatedly stresses its firm commitment to encouraging a massive transition to HTTPS, and concludes that this signal will probably get stronger in the future.

Even setting user security aside, the transition to HTTPS is about to become essential for most websites, whether for SEO, for access to some browser features, or to avoid a security warning that would harm their business.

However, some constraints come along with HTTPS.

Mixed Content, a flaw affecting 11% of HTTPS websites

A web page is composed of many resources (images, style sheets…), and even on an HTTPS site, some of them are sometimes loaded using the non-secure version of the protocol. This is referred to as mixed content.

This problem is frequent, and it undermines the security of exchanges.

In a survey we conducted in 2014, we found that 11% of websites were affected. To learn more, please read our complete article about it.

To sum up, there are several levels of mixed content, and some of them can lead browsers to block resources. In any case, this kind of content triggers a security warning, which harms the user’s trust.
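To make the idea concrete, here is a minimal sketch of how mixed content could be spotted in a page's HTML, using only the Python standard library. The tag list, the "active"/"passive" labels (terms commonly used for the blockable and the merely warned-about kinds) and the `find_mixed_content` helper are illustrative assumptions, not the method behind the survey mentioned above, and a real audit would cover many more cases (CSS `url()`, fonts, XHR…).

```python
from html.parser import HTMLParser

# Attribute that triggers a resource fetch, per tag (illustrative, not exhaustive).
RESOURCE_ATTRS = {"img": "src", "script": "src", "iframe": "src", "link": "href"}
# Tags whose insecure loading is assumed here to count as "active" mixed content.
ACTIVE_TAGS = {"script", "iframe", "link"}


class MixedContentFinder(HTMLParser):
    """Collects (kind, tag, url) for resources referenced over plain HTTP."""

    def __init__(self):
        super().__init__()
        self.findings = []

    def handle_starttag(self, tag, attrs):
        attr_name = RESOURCE_ATTRS.get(tag)
        if attr_name is None:
            return
        attributes = dict(attrs)
        # Only style sheets are treated as resources among <link> tags.
        if tag == "link" and "stylesheet" not in (attributes.get("rel") or "").lower():
            return
        url = attributes.get(attr_name) or ""
        if url.lower().startswith("http://"):
            kind = "active" if tag in ACTIVE_TAGS else "passive"
            self.findings.append((kind, tag, url))


def find_mixed_content(html):
    """Return the insecure resource references found in an HTTPS page's HTML."""
    finder = MixedContentFinder()
    finder.feed(html)
    return finder.findings
```

Calling `find_mixed_content(page_source)` on the HTML of a page served over HTTPS returns one tuple per insecure reference, in document order.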


The generalisation of HTTPS should reduce the extent of the problem, since most resources should progressively become available over HTTPS.

However, to benefit from it, it will be necessary to update the references to these resources (from http:// to https:// in the path definition), which may represent heavy work for many sites.
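As a rough illustration of what such an update might look like, the sketch below rewrites `src` and `href` references from http:// to https://, but only for hosts known to serve the same resources over HTTPS. The `HTTPS_READY_HOSTS` set and the `upgrade_references` function are hypothetical names chosen for this example, and a regular expression is only a simplification: a real migration would more likely go through the templates or the CMS.

```python
import re

# Hypothetical list of hosts verified to serve the same resources over HTTPS.
HTTPS_READY_HOSTS = {"cdn.example.com"}

# Matches src="http://host or href='http://host when the host is followed by
# a slash or the closing quote (URLs with an explicit port are left alone).
_REFERENCE = re.compile(
    r"""(?P<prefix>\b(?:src|href)\s*=\s*["'])http://(?P<host>[^/"'\s:]+)(?=[/"'])""",
    re.IGNORECASE,
)


def upgrade_references(html, https_ready_hosts=HTTPS_READY_HOSTS):
    """Rewrite http:// references to https:// for the listed hosts only."""

    def replace(match):
        host = match.group("host")
        if host.lower() in https_ready_hosts:
            return f"{match.group('prefix')}https://{host}"
        return match.group(0)  # unknown host: keep the reference untouched

    return _REFERENCE.sub(replace, html)
```

Restricting the rewrite to an explicit list of hosts avoids pointing to an https:// URL that does not exist, which would break the resource instead of securing it.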

Recent good news: a new CSP (Content Security Policy) directive has been created: upgrade-insecure-requests. It is being implemented in Firefox and Chrome right now, so we can hope to benefit from it very soon!

On an HTTPS website, this directive asks the browser to fetch a resource over HTTPS even if its path was defined using HTTP. It makes it possible to force all resources over HTTPS without modifying every path. Note that there is no fallback: if the resource is not available over HTTPS, the upgraded request fails and the resource is not loaded.
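For illustration, here is a minimal sketch of a server sending this policy, written as a bare WSGI application in Python. The directive name follows the W3C document listed in the sources (upgrade-insecure-requests); the `app` function and the page body are made up for this example, and in practice the header is usually set in the web server or framework configuration.

```python
def app(environ, start_response):
    """Tiny WSGI app whose response opts in to upgrading insecure requests."""
    # The page still references its image over http://; a browser supporting
    # the directive is expected to request it over https:// instead.
    body = b'<img src="http://example.com/logo.png">'
    headers = [
        ("Content-Type", "text/html; charset=utf-8"),
        ("Content-Length", str(len(body))),
        ("Content-Security-Policy", "upgrade-insecure-requests"),
    ]
    start_response("200 OK", headers)
    return [body]
```

Any WSGI server can serve this function, for instance `wsgiref.simple_server.make_server` from the standard library for a local test. The policy is only meaningful when the page itself is delivered over HTTPS.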

Some concerns about HTTPS, answered:

A certificate is costly

Some providers already offer certificates for free, and the Let’s Encrypt project should solve the issue by mid-2015: https://letsencrypt.org/

HTTPS is slow

Encrypting the communication has a cost, but substantial improvements have been made. One example is the HTTP Strict Transport Security (HSTS) mechanism: through a Strict-Transport-Security header, it lets a site force the use of HTTPS on a domain and avoid a possible HTTP-to-HTTPS redirection on subsequent visits.

You will find further information about HTTPS and its performance challenges here: https://istlsfastyet.com/
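As an example, the header value can be built as in the sketch below. The `hsts_header` helper is a hypothetical name for this article; `max-age` (a duration in seconds) and `includeSubDomains` are the directives defined by the HSTS specification, and the one-year duration is only a commonly seen choice, not a recommendation from the sources.

```python
def hsts_header(max_age_seconds, include_subdomains=False):
    """Build the Strict-Transport-Security header as a (name, value) pair."""
    if max_age_seconds < 0:
        raise ValueError("max_age_seconds must not be negative")
    value = f"max-age={max_age_seconds}"
    if include_subdomains:
        # Extends the policy to every subdomain of the responding host.
        value += "; includeSubDomains"
    return ("Strict-Transport-Security", value)


# One year, subdomains included.
ONE_YEAR = 365 * 24 * 60 * 60
header = hsts_header(ONE_YEAR, include_subdomains=True)
```

Browsers only take this header into account when it is received over HTTPS, so the first visit over plain HTTP still needs a redirection.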

In our website speed test tool, we have been advising you to use HTTPS for several months. Don’t hesitate to test your website on www.dareboost.com to discover all of our other recommendations!

Sources:

https://httparchive.org/trends.php?s=All&minlabel=Apr+15+2011&maxlabel=Apr+1+2015#perHttps

https://www.chromium.org/Home/chromium-security/marking-http-as-non-secure

https://groups.google.com/a/chromium.org/forum/#!msg/blink-dev/2LXKVWYkOus/gT-ZamfwAKsJ

https://docs.google.com/document/d/1IGYl_rxnqEvzmdAP9AJQYY2i2Uy_sW-cg9QI9ICe-ww/edit

https://w3c.github.io/webappsec/specs/powerfulfeatures/

https://webmasters.googleblog.com/2014/08/https-as-ranking-signal.html

https://www.w3.org/TR/upgrade-insecure-requests/